Synthesized from 11 source documents across the discovery economics, publisher rebellion, disruptive intermediaries, extraction & reciprocity, and agentic web research lenses. Source-reviewed, fact-reviewed, and gap-reviewed before publication.

A bakery in Portland. She has been in business for fourteen years. Her website is a WordPress theme her nephew set up in 2016. She gets most of her orders from Google — or she did, until the orders started thinning and she couldn’t figure out why. She has never heard of an AI Overview.

A gym in Denver. Two locations, twenty-three employees. They redesigned their site last year, spent real money on it. Their developer told them the SEO was “optimized.” The site is a React single-page application. Every AI crawler that visits sees an empty shell.

A ten-person agency in Toronto. They build sites for businesses like the bakery and the gym. They’ve read about AI disruption. They do not know what to do about it. The advice they find online is written for enterprise clients with six-figure marketing budgets.

These are composites. That is the point. There are no real names to use. The data that would tell their specific stories does not exist.

The tier nobody measured

Conductor’s domain study covers 13,770 websites — enterprise customers. Contentsquare’s benchmark draws from 6,500 sites and 99 billion sessions — large-scale digital properties. The Pew Research behavioral study that produced the most rigorous AI Overview impact measurement — 68,879 searches, no commercial conflicts — tracked user behavior, not business outcomes. Seer Interactive’s conversion rate data comes from a single large organization. Visibility Labs’ e-commerce comparison covers 94 stores but excludes non-commercial content.

Rigorous. Independent. And silent on what happens below the enterprise line.

No study has specifically measured AI referral economics for independent small businesses. State that plainly. It is the single largest limitation of every recommendation in this series, and in every other publication covering this territory. The data describes what is happening to the large. It implies what is happening to the small. It does not measure it.

The closest approach is SOCi’s 2026 Local Visibility Index, which analyzed nearly 350,000 locations across 2,751 multi-location brands and found ChatGPT recommends only 1.2% of local business locations. Gemini reaches 11%. Perplexity, 7.4%. Google’s traditional local 3-pack, 35.9%. SOCi is a local marketing platform with commercial interest in the findings, and the sample covers multi-location enterprise brands, not independent businesses. If enterprise brands with professional marketing teams achieve 1.2% ChatGPT recommendation rates, independents without dedicated marketing resources likely fare worse. But that is inference, not measurement. The measurement does not exist.

Meanwhile, BrightLocal’s 2026 consumer survey (1,002 U.S. adults via SurveyMonkey) found 45% of consumers now use AI tools for local business recommendations, up from 6% the previous year. The leap is dramatic and may partly reflect growing awareness of what counts as “AI tools” rather than purely behavioral change. But even if the real behavioral shift is half that number, it represents a discovery channel where, by SOCi’s measure, ChatGPT recommends just 1.2% of business locations, and nothing in the available data shows that gap closing.

The compression pattern

In every intermediary disruption the research behind this series examined, the mid-tier was permanently compressed. The pattern is structural. It does not depend on the industry, the technology, or the intentions of the companies involved.

Music. Global recorded music revenues collapsed from $22.2 billion in 1999 to $13.0 billion by 2014, a decline of roughly 41% over fifteen years, before recovering to $31.7 billion by 2025. (IFPI Global Music Report) The aggregate recovery was real. The distribution was not. Nearly 1,500 artists earned more than $1 million from Spotify in 2024. (CNBC, March 2025) In the same year, Spotify stopped paying royalties on any track with fewer than 1,000 streams, demonetizing an estimated 86% of tracks on the platform. (Disc Makers, 2025; independent analyst calculation, methodology not peer-reviewed) The industry recovered. The middle vanished.

Search. Chartbeat data reported through Axios (March 2026) shows search referral traffic declined 60% for small publishers over two years, compared to 47% for mid-sized publishers and 22% for large publishers. The gradient is consistent: the smaller you are, the more you lose. A travel blog called The Planet D shut down after a 90% traffic decline following the introduction of AI Overviews. Stereogum, a music blog, lost 70% of its ad revenue. (AdExchanger, 2025; NPR, July 2025) These were not small businesses. They were the mid-tier — established enough to depend on search traffic, too small to negotiate with the platforms consuming it.

Transportation. When Uber disrupted the taxi industry, the medallion holders who lost the most were immigrants from Bangladesh, Pakistan, Haiti, and Ghana who had taken on median debts of $500,000 to buy the right to operate. (Documented NY, 2021; Columbia Human Rights Law Review) The technology disrupted an industry. The financial damage landed on the people who had leveraged everything they had into a regulated system that was deregulated out from under them.

The tier below the middle — the one that never appears in the datasets — absorbs whatever terms emerge from fights it was never party to.

The four-tier reality

The blocking debate that dominates industry conversation is a Tier 1 concern applied to a Tier 4 reality.

The research behind this series identified four tiers of publisher economics. Tier 1 — major publishers like the New York Times and News Corp — can block AI crawlers, negotiate licensing deals worth $16 million to $50 million annually, and absorb any traffic loss because licensing revenue compensates. Tier 2 — mid-tier publishers and vertical sites — face the acute squeeze: no licensing leverage, heavy search dependency, 47-55% traffic declines. Tier 3 — independent creators on networks like Raptive’s 6,000+ sites — see no statistically significant traffic change from blocking, and Raptive continues to recommend it as a defensive default.

Tier 4 is small business websites. They are fundamentally different from all three publisher tiers. Small business sites do not generate content that AI companies want to license. They are not targeted by training crawlers in any meaningful volume. Their primary threat is AI Overviews cannibalizing the search traffic that used to reach them — and that is not addressable through robots.txt.

Blocking AI crawlers costs a small business nothing and gains nothing. The fight that the industry is having — whether to block, what to block, how to negotiate from a blocking position — is a fight about a different tier’s problems. The entire conversation is about the wrong people.
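A minimal sketch of why robots.txt does not reach the Tier 4 problem, using Python's standard-library robots.txt parser. The robots.txt content and the `bakery.example` URL are hypothetical. The sketch also assumes that AI Overviews draw on pages fetched by Google's ordinary search crawler, so the only way to keep a page out of them via robots.txt would be to block Googlebot itself, which removes the page from search.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: blocks two AI training crawlers, allows everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: CCBot
Disallow: /

User-agent: *
Allow: /
"""

MENU_URL = "https://bakery.example/menu"  # placeholder URL, not a real site


def allowed(user_agent: str, url: str) -> bool:
    """Return True if the robots.txt above lets this user agent fetch the URL."""
    parser = RobotFileParser()
    parser.parse(ROBOTS_TXT.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for agent in ("GPTBot", "CCBot", "Googlebot"):
        print(f"{agent}: {'allowed' if allowed(agent, MENU_URL) else 'blocked'}")
```

The training crawlers are turned away and the search crawler is not. Under the stated assumption, the traffic loss described above passes straight through this file.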

The discovery fracture

The fracture runs deeper than traffic loss. The discovery landscape itself is splitting.

Sterling Sky’s analysis of 322 markets found AI local packs surface 68% fewer unique businesses than traditional local packs — 5,943 unique businesses versus 18,330 in standard results. Fewer businesses visible. The same businesses visible more often. The call buttons that let a customer tap to phone a business directly from search results are removed in AI local packs, pushing users toward additional interaction steps. Visibility narrows. Friction increases.
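The 68% figure can be checked from the two counts quoted above. This is a sketch of the arithmetic only; the function name is illustrative and not part of Sterling Sky's methodology.

```python
def percent_fewer(baseline: int, observed: int) -> float:
    """Percentage by which `observed` falls short of `baseline`."""
    return (baseline - observed) / baseline * 100


# Unique businesses surfaced across the 322 markets, as quoted in the text.
TRADITIONAL_PACKS = 18_330
AI_PACKS = 5_943

if __name__ == "__main__":
    print(f"{percent_fewer(TRADITIONAL_PACKS, AI_PACKS):.1f}% fewer unique businesses")
```

Put the other way round, roughly one business in three that appears in a traditional local pack also appears in an AI local pack.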

The platforms are not even looking at the same web. Ahrefs analyzed 15,000 long-tail queries and found only 12% URL overlap between AI-cited sources and Google’s traditional top 10 results. What ranks in Google does not predict what gets cited by ChatGPT. What gets cited by ChatGPT does not predict what Perplexity surfaces. Each AI platform draws from a different pool — ChatGPT pulling heavily from Yelp, Foursquare, and TripAdvisor; Gemini grounded in Google Maps; Perplexity favoring niche directories like Zocdoc, Angi, and Houzz. (BrightLocal, 2025) The fragmented discovery landscape means a small business cannot optimize for “AI search” the way it once optimized for Google. There is no single surface to appear on.

For service businesses — bakeries, dentists, accountants — the platform path that e-commerce can walk does not exist. Shopify merchants got agentic commerce by default in March 2026 when Agentic Storefronts rolled out through the Universal Commerce Protocol. WordPress released an MCP Adapter. Stripe’s Agentic Commerce Suite covers Squarespace, WooCommerce, BigCommerce. Platform-mediated agent access is closing the commerce gap for businesses that sell products.

But the local bakery does not sell products through Shopify. Her “agent-actionable” need is online ordering, catering inquiries, and custom cake requests. Whether existing scheduling platforms — Calendly, Acuity, Mindbody, Square Appointments — are building agent-accessible interfaces is an open question the research could not answer. The service business gap is the least addressed by current platform-mediated solutions and the most relevant to the audience this project is written for.

The platform path is also a dependency path. A merchant whose agent-accessibility exists only through Shopify’s infrastructure is more locked in than before. If Shopify changes pricing, terms, or agent infrastructure priorities, businesses built on that infrastructure have no fallback. The gap-closing mechanism is also a lock-in mechanism — a dynamic the dependency trap research behind this series documents across every intermediary disruption examined.

The resolution that never reaches them

Every extraction conflict produces a resolution. Every resolution is tiered.

The research examined five major extraction conflicts and their outcomes. OPEC — five nations, one non-substitutable commodity — achieved producer sovereignty and pricing control. The music industry — three major labels controlling roughly 70% of the market — recovered aggregate revenue through Spotify licensing, with gains concentrated among the majors. Google and publishers — millions of fragmented sites — produced a patchwork of deals that pay large publishers and leave small publishers with nothing. When Facebook was forced to negotiate with Canadian publishers under the Online News Act, it walked away entirely. Canadian publishers lost 85% of engagement on Meta platforms. Meta suffered no measurable user attrition.

The structural predictor is concentration. Concentrated producers get the strongest terms. Fragmented producers get the weakest.

Five documented resolution mechanisms are emerging for the AI-web conflict: bilateral licensing (publisher-level deals with AI companies), infrastructure-mediated licensing (Cloudflare’s Pay Per Crawl), revenue-sharing (ProRata’s attribution-based model), regulator-mandated bargaining (Australia’s News Media Bargaining Code), and proposed statutory licensing (compulsory rates set by government). Small businesses fall outside all five. They have no content to license bilaterally. They have no crawl traffic to monetize through infrastructure marketplaces. They have no volume to generate meaningful revenue-sharing. They have no collective bargaining leverage. And no statutory licensing framework has been implemented.

There is one structural advantage the research identifies. Service businesses — bakeries, dentists, accountants — retain leverage through retrieval dependency. AI systems need fresh local data. Hours, availability, pricing, menus, service areas. This information cannot be pre-trained or cached. An AI agent that tells a customer a bakery has sourdough available when the morning batch sold out by ten is worse than no agent at all. The leverage is not in the content. It is in the freshness. Service businesses own something that must be fetched live, and that ongoing dependency survives regardless of how the extraction wars resolve.

The second lever is infrastructure choice. For businesses that cannot evaluate the implications of their hosting provider, the developer or agency that makes that choice on their behalf is making the most consequential infrastructure decision of the next decade. The CMS default is the policy. The platform choice is the policy choice. Cloudflare, which also authored the Web Bot Auth IETF draft and operates the dominant CDN-level verification infrastructure, runs block-by-default for AI bot traffic — a decision that affected roughly 20% of the public web in a single day. The binary cost structure tells you who this serves: Web Bot Auth verification is available on Cloudflare’s free plan. Custom MCP server deployment runs $25,000 and up (vendor agencies selling those services; treat as indicative, not authoritative). The defaults are the only policy most small businesses will ever have.

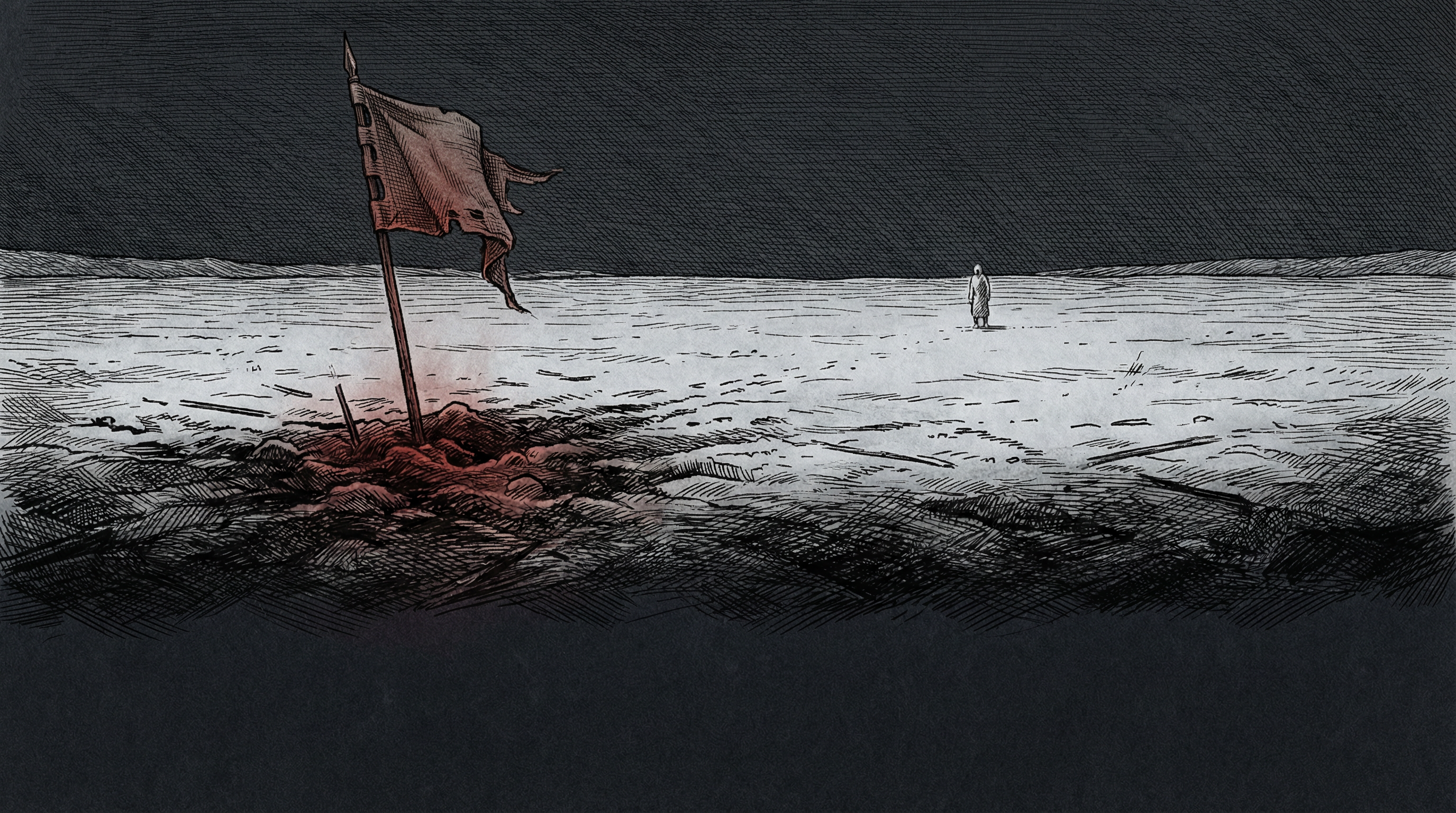

Towton

Palm Sunday, 1461. Somewhere between twenty and thirty thousand men lie dead in the snow outside a village in Yorkshire. The Yorkist army, led by the eighteen-year-old Edward IV, had fought the Lancastrians in a blinding snowstorm for ten hours, in what is often described as the bloodiest single day ever fought on English soil. The Yorkists won decisively. The Lancastrian king fled. The crown changed hands.

And it did not matter.

Twenty-four more years of fighting. Two more changes of dynasty. The House of Lancaster, defeated so thoroughly at Towton that its cause seemed finished, produced through Margaret Beaufort’s son a claimant who had spent fourteen years in exile — not the strongest, not the most connected, not the one anyone would have chosen. Henry Tudor landed at Milford Haven in 1485 with a borrowed army and won a crown that the side that had won at Towton could not hold.

The side that lost the defining battle won the war by surviving it.

The bakery in Portland does not need to win the extraction war. She does not need to negotiate licensing terms with OpenAI, implement a custom MCP server, or hire a GEO consultant. She needs her website to render server-side so AI crawlers can read it. She needs her business name, address, phone number, and menu in structured data that machines can parse. She needs her hours to be accurate, her reviews to be present, and her directory listings to be consistent across the platforms AI systems actually consult. She needs infrastructure that enforces reasonable defaults on her behalf, because she will never read an IETF draft or evaluate a trust protocol.
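What "structured data that machines can parse" might look like, as a minimal sketch. It builds a Schema.org `Bakery` description as JSON-LD, ready to embed in a page's HTML. Every value below (name, address, phone, URLs, hours) is a made-up placeholder, and the helper names are illustrative. The property names are the standard Schema.org ones, but which of them any given AI system actually reads is not something the research establishes.

```python
import json


def bakery_jsonld(name, street, city, region, postal_code, phone, url, menu_url, hours):
    """Build a Schema.org Bakery description as a JSON-LD dictionary.

    `hours` is a list of (days, opens, closes) tuples, e.g.
    (["Tuesday", "Wednesday"], "07:00", "15:00").
    """
    return {
        "@context": "https://schema.org",
        "@type": "Bakery",
        "name": name,
        "url": url,
        "telephone": phone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
            "addressCountry": "US",
        },
        "hasMenu": menu_url,
        "openingHoursSpecification": [
            {
                "@type": "OpeningHoursSpecification",
                "dayOfWeek": days,
                "opens": opens,
                "closes": closes,
            }
            for days, opens, closes in hours
        ],
    }


def as_script_tag(data: dict) -> str:
    """Wrap JSON-LD in the script tag that goes in the server-rendered HTML."""
    # Escape "</" so no string value can close the script element early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder business details, invented for illustration only.
EXAMPLE = bakery_jsonld(
    name="Example Street Bakery",
    street="123 Example St",
    city="Portland",
    region="OR",
    postal_code="97200",
    phone="+1-503-555-0100",
    url="https://bakery.example/",
    menu_url="https://bakery.example/menu",
    hours=[(["Tuesday", "Wednesday", "Thursday", "Friday"], "07:00", "15:00"),
           (["Saturday", "Sunday"], "08:00", "13:00")],
)

if __name__ == "__main__":
    print(as_script_tag(EXAMPLE))
```

The same name, address, and phone values should match what appears in her directory listings. A single source of truth like this dictionary makes that consistency easier to keep.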

She needs to survive this. The structural readiness that makes survival possible is documented in this series — the semantic HTML, the Schema.org discipline, the rendering fundamentals that have outlasted every intermediary transition in the record. None of it is new. None of it is exciting. None of it is what anyone is selling.
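A rough way to test the rendering fundamentals is to look at a page the way a crawler that does not execute JavaScript would: read the raw HTML and see what text is there. The sketch below assumes such a crawler sees only the initial HTML response. The two sample documents are invented, and skipping `head` and `noscript` content is a simplification chosen for this illustration.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects body text that is present in raw HTML without running scripts."""

    # Simplification: ignore metadata, scripts, styles, and noscript fallbacks.
    SKIP = {"head", "script", "style", "noscript"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def visible_text(html: str) -> str:
    """Return the readable body text found in an HTML string."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


# Invented examples: a client-rendered shell and a server-rendered page.
SPA_SHELL = """<!doctype html><html><head><title>Denver Gym</title></head>
<body><div id="root"></div><script src="/static/js/main.js"></script></body></html>"""

SERVER_RENDERED = """<!doctype html><html><head><title>Denver Gym</title></head>
<body><main><h1>Class schedule</h1><p>Open gym daily from 5am.</p></main></body></html>"""

if __name__ == "__main__":
    for label, html in (("SPA shell", SPA_SHELL), ("Server-rendered", SERVER_RENDERED)):
        print(f"{label}: {visible_text(html)!r}")
```

An empty result for a page that looks full in a browser is the empty shell from the gym in Denver.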

For those who build for her — the developers, the agencies, the ten-person shop in Toronto — the work is not optimization. It is fortification. Clean infrastructure. Structured data. Foundations that do not depend on any single platform’s continued goodwill. The forms are old. The stakes are new.

Survival first, slay second.

If you build for this tier — or you are this tier — The Quartermaster has your weapon. Describe your stack. Get your specific implementation.

The extraction data behind this piece is in The Plunder. The historical pattern that predicts the compression is in Trapped Twice. The disciplines that survive every cycle are in Empty Titles. The fog ahead is mapped in The Snowstorm.