The Three-Tier Taxonomy

Voice features decompose into three tiers of systematizability — and every design decision in a creative AI pipeline flows from which tier you're addressing.

Structured from 41 research documents across 3 domains and 9 analytical lenses. Source-attributed, confidence-tiered.

Brandon Sanderson spent eighteen months inside Robert Jordan’s notes before he wrote a single word of Wheel of Time prose. He read Jordan’s unpublished drafts, his worldbuilding files, his margin comments — the accumulated geology of a mind that had spent two decades inside one fictional universe. When Sanderson finally wrote, he did not try to mimic Jordan’s sentence patterns. He wrote in his own style, transparently, and the books worked. Readers accepted them. Scholars accepted them. Jordan’s widow, who had chosen Sanderson from among the candidates, accepted them. The surface was visibly different. The depth was right.

The Dune prequels took the opposite approach. Brian Herbert and Kevin J. Anderson kept the desert planet, the political factions, the spice, the sandworms: every surface element that made Dune recognizable as Dune. What they did not keep was Frank Herbert’s philosophical density, his narrative patience, his willingness to let a scene sit in ambiguity rather than resolve into action. The prequels read like Dune. They do not think like Dune. Readers noticed. More than twenty-five years after the first prequel appeared in 1999, the gap between the original novels and the continuations remains one of the most discussed cases of franchise inheritance in science fiction.

The difference between those two outcomes — Sanderson’s success through depth, the Dune prequels’ failure through surface — is not a literary curiosity. It is the architectural blueprint for every decision in a creative AI pipeline. And it happens to be a finding that this project’s research arrived at from three independent directions.

The Finding That Organized Everything

The Golden Key research project studied 41 documents across three domains — agent engineering, creative intelligence, and implementation strategy — through nine analytical lenses. The goal was to understand what it takes to build a multi-agent pipeline that produces prose faithful to a specific author’s voice. The synthesis surfaced many findings, but one organized all the others.

Voice features decompose into three tiers of systematizability.

That sentence sounds like a taxonomy someone invented to sort things into boxes. It is not. It is an empirical finding that emerged from computational stylistics, cognitive science, literary history, and agent engineering research — four independent evidence streams converging on the same structure. The taxonomy was not designed. It was discovered, and then everything else was designed around it.

Tier 1: Reliably systematizable. Vocabulary profiles, function word frequencies, sentence length distributions, punctuation patterns, register markers. These are the surface features of a writer’s voice, the elements that computational stylistics has been measuring since Burrows’s function-word studies in the 1980s and that his Delta measure, introduced in 2002, turned into a standard attribution method. They are countable, comparable, and reproducible. An LLM given a well-specified prompt can hit target sentence-length distributions and function word ratios with reasonable accuracy. A stylometric tool can verify the output against a baseline corpus. Tier 1 is where automation works.
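To make "countable, comparable, and reproducible" concrete, here is a minimal sketch of two Tier 1 measurements: sentence-length statistics and a function-word profile. The word list, the naive sentence splitter, and the function names are illustrative assumptions, not the pipeline's actual stylometric tooling.

```python
import re
from collections import Counter
from statistics import mean, pstdev

# Illustrative subset only; real stylometric work uses a much longer list.
FUNCTION_WORDS = ("the", "and", "of", "to", "in", "a", "that", "but", "it", "was")
WORD = re.compile(r"[A-Za-z'’]+")


def sentence_lengths(text: str) -> list[int]:
    """Word count per sentence, splitting naively after ., ! or ?."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(WORD.findall(s)) for s in sentences]


def function_word_profile(text: str) -> dict[str, float]:
    """Relative frequency of each listed function word among all word tokens."""
    tokens = [t.lower() for t in WORD.findall(text)]
    counts = Counter(tokens)
    total = len(tokens) or 1  # avoid division by zero on empty input
    return {w: counts[w] / total for w in FUNCTION_WORDS}


def tier1_summary(text: str) -> dict:
    """Mean and spread of sentence length plus the function-word profile."""
    lengths = sentence_lengths(text) or [0]
    return {
        "mean_sentence_length": mean(lengths),
        "sentence_length_sd": pstdev(lengths),
        "function_words": function_word_profile(text),
    }
```

Running the same summary over a generated passage and over a baseline corpus gives two profiles that can be compared number by number, which is what makes this tier checkable without human judgment.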

Tier 2: Partially systematizable. Imagery systems, thematic preoccupation, paragraph rhythm, emotional register, narrative pacing within scenes. These are structural features — patterns that emerge across paragraphs and pages rather than within sentences. An LLM can generate them, but not reliably, and not consistently over long stretches of text. They require agent generation with human review: the machine produces candidate material, and a human evaluates whether the imagery system is coherent, whether the thematic density matches the target, whether the paragraph rhythm varies enough to avoid monotony. Tier 2 is where collaboration works.

Tier 3: Resistant to systematization. Worldview integration, narrative timing, pattern-breaking, the purposeful deployment of variation itself. These are the dimensions that readers and critics actually weight most heavily when they assess whether a continuation or pastiche captures an author’s spirit. They are the elements that distinguish a living voice from a statistical composite. No current LLM reproduces them reliably, and the evidence suggests that this is not merely a capability gap that next-generation models will close — it reflects something structural about what these dimensions are. Tier 3 is where human direction is not optional but architectural.

The taxonomy maps directly onto the Sanderson/Dune comparison. Sanderson worked at Tier 3 (worldview, character interiority, the deep logic of Jordan’s narrative universe) and let Tier 1 diverge. The Dune prequels worked at Tier 1 (surface genre conventions, proper nouns, setting details) and let Tier 3 diverge. The outcomes are what the taxonomy would predict.

The Evidence Base

The taxonomy is not a framework imposed on the data. It is the structure the data revealed. The evidence comes from multiple independent sources, and the convergence is what gives the finding its weight.

Detection accuracy. Kirilloff et al. (2025), published in the Harvard Data Science Review, found that expert human judges distinguish AI-generated text from authentic 19th-century prose with 96.4% accuracy. The study tested GPT-4 imitations of specific authors — precisely the task this pipeline attempts. A 96.4% detection rate means that even frontier models, given the author’s text as reference, produce output that trained readers can identify as synthetic almost every time. The surface is close. Something deeper is not.

There is a notable exception. Mark Twain’s voice proved harder to detect — 62% accuracy versus the 96.4% average. The researchers attributed this to Twain’s distinctive, relatively simple prose style and the abundance of his work in training data. The Twain exception is evidence that the systematizability boundary is not uniform. Some voices sit closer to the edge. But the 96.4% baseline across 19th-century authors establishes that for most distinctive literary voices, the gap between surface reproduction and deep faithfulness remains large.

Variability deficit. Wenger and Kenett (2025) measured the semantic diversity of LLM-generated text against human writing and found that LLM populations exhibit 19-38% less variability across three independent creativity measures. This is the homogeneity problem quantified: LLMs converge toward the statistical center of their training distributions, producing text that is simultaneously competent and uniform. The variability gap is not a quality problem in the conventional sense — the text is grammatical, coherent, often elegant. It is a life problem. The text lacks the purposeful irregularity that human writers deploy unconsciously: the sentence that breaks a rhythm, the paragraph that withholds where the reader expects expansion, the image that arrives from outside the pattern.

The same study found sentiment inflation — AI-generated text averaged +0.82 on sentiment measures where human text averaged -0.12. LLMs default to warmth, resolution, positive affect. For an author like MacDonald, whose theological vision includes genuine darkness, genuine loss, and grace that arrives through suffering rather than comfort, sentiment inflation is not a minor calibration issue. It is a structural threat to faithfulness.

Structural convergence. Mazur (2025) analyzed flash fiction across 29 different language models and found near-universal convergence on specific narrative conventions: linear chronology, close-third-person point of view, positive endings. These are not the conventions of any particular author. They are the conventions of LLM-generated fiction as a category — the statistical center that all models drift toward regardless of prompting. For a pipeline attempting to reproduce MacDonald’s intrusive first-person narrator, his non-linear fairy-tale logic, and his endings that resolve through mystery rather than closure, the structural convergence finding means that the default output of any generation step will actively work against the target voice at the structural level.

Cognitive science. The three-tier structure is not only an empirical observation about LLM output. It maps onto established findings in cognitive science about how humans acquire and reproduce patterned language. Saffran et al. (1996) demonstrated implicit statistical learning in infants, who segment words from continuous speech by tracking which syllables tend to follow one another; the research reads style acquisition as the same mechanism at work when readers absorb an author’s patterns through sustained exposure. Bock and Griffin (2000) showed that syntactic priming operates automatically and unconsciously. Polanyi’s (1966) work on tacit knowledge provides the theoretical grounding for why the deepest dimensions of voice resist formalization: they are known through practice, demonstrated through performance, but not fully articulable as rules.

LLM training through next-token prediction is structurally analogous to Saffran’s statistical learning. This is why Tier 1 works — the same mechanism that captures surface patterns in human learning also captures them in LLM training. But human style acquisition also involves embodiment, developmental trajectory, aesthetic response, and the capacity for intentional variation. These are the capacities that Tier 3 depends on, and they are structurally absent from statistical language models. The taxonomy is grounded not only in what LLMs produce but in why they produce it that way.

The Faithfulness Hierarchy

The three-tier taxonomy describes which voice features can be automated. A second finding — the faithfulness hierarchy — describes which voice features matter most to readers.

The hierarchy emerged from the psychology of mimicry research, validated by literary history:

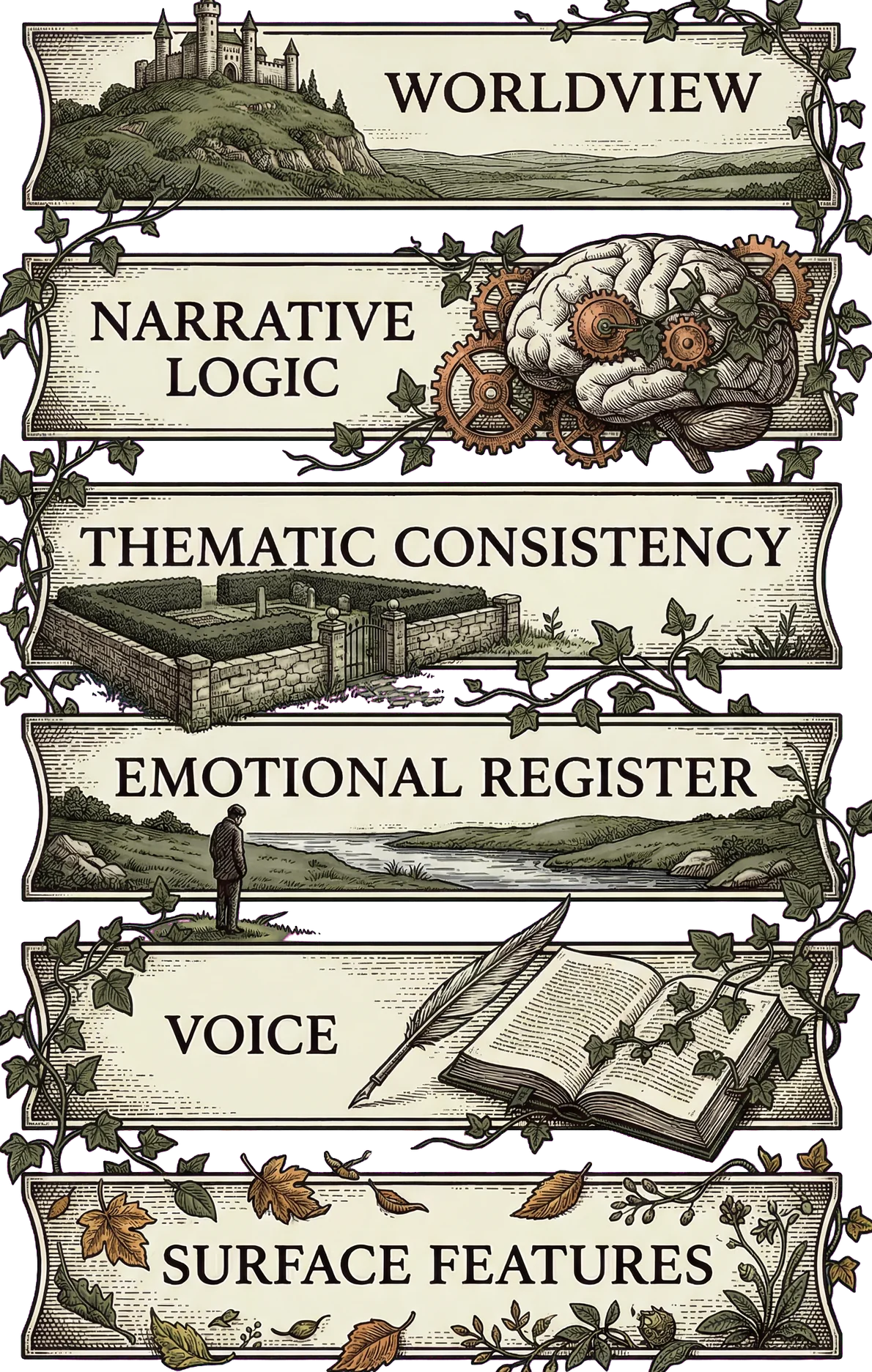

Worldview > narrative logic > thematic consistency > emotional register > voice > surface features.

The ordering is a design heuristic, not an empirically tested reader-preference ranking. No study has asked readers to weight these dimensions against each other in a controlled setting. But the historical evidence is consistent. Sanderson’s Wheel of Time completion prioritized character interiority and narrative logic over surface style matching — and succeeded. The Dune prequels preserved surface genre conventions while replacing philosophical density with action melodrama — and failed. The Austen pastiche tradition, which spans over 300 published works, shows readers forgiving surface deviations when the narrative world feels right but rejecting stylistically polished surfaces that feel hollow.

The hierarchy inverts the naive approach to creative AI. The instinct — build a style-matching system, get the vocabulary and sentence structure right, and the rest will follow — is precisely backward. The dimensions that are easiest to automate (Tier 1 surface features) are the dimensions that matter least to readers. The dimensions that matter most (Tier 3 worldview integration) are the dimensions that resist automation most stubbornly.

For MacDonald specifically, worldview is not an abstraction. His theological vision — grace as ambient condition rather than interventional reward, transformation through suffering and surrender rather than through achievement, divine presence woven into the physics of the fictional world rather than announced through doctrine — is the architecture of his fiction. A passage can match MacDonald’s sentence-length distribution, his function word ratios, his preference for semicolons, and still be fundamentally unfaithful if the theological substrate is wrong. Conversely, a passage that captures MacDonald’s vision of grace — that the garden does not reward good behavior but simply operates by its own laws, that the old woman’s authority is established through what she does rather than what she says — can diverge from his surface patterns and still feel authentically his.

The pipeline’s evaluator weights reflect this hierarchy directly. When the evaluation rubric scores a passage, Worldview carries a weight of 6. Surface Features carries a weight of 1. The ratio is the faithfulness hierarchy made operational: with the other dimensions held equal, a passage that scores 5 on Worldview and 3 on Surface Features is rated higher than a passage that scores 5 on Surface Features and 3 on Worldview, because those two dimensions contribute 6×5 + 1×3 = 33 weighted points in the first case and 6×3 + 1×5 = 23 in the second. This is a deliberate architectural commitment, not a default.

The Homogeneity Problem as Design Constraint

The 19-38% variability gap is not a quality problem to be addressed after generation. It is a design constraint that must shape the pipeline from the start.

The homogeneity problem manifests at two scales simultaneously. At the micro level, LLM-generated prose lacks purposeful sentence-to-sentence variation — the text settles into rhythmic patterns that, while individually well-crafted, become predictable over sustained reading. At the macro level, the same convergence toward statistical center means that across multiple generation runs, the output gravitates toward similar narrative structures, similar emotional arcs, similar resolutions. Both scales were documented across multiple independent studies with large effect sizes: Wenger and Kenett (2025) on semantic diversity, Kirilloff et al. (2025) on stylistic distinctiveness, Mazur (2025) on narrative structural convergence, Doshi and Hauser (2024) on creative text homogeneity.

For a MacDonald pastiche, the homogeneity problem is particularly acute. MacDonald’s narrative voice is defined by controlled variation — long, philosophically dense paragraphs followed by short declarative sentences; extended narrator digressions followed by stretches of clean narrative without commentary; passages of theological gravity interrupted by moments of dry wit or domestic practicality. The pipeline’s evaluation scored Homogeneity Resistance at 3 out of 5, the lowest dimension in the story assessment. The evaluator noted that “the narrator’s digressive voice — the self-interrupting, qualifying, circling habit — is beautifully rendered but applied with too little variation across the full story.” The macro-rhythm of scene-then-digression became predictable. Qualifying constructions — “and I think,” “but I must,” “for there is a kind of” — recurred with a density that created uniform texture across passages that should have felt different from one another.

This is the homogeneity problem in action. The pipeline captured MacDonald’s most distinctive narrative mannerism — the intrusive, self-correcting, perpetually-qualifying narrator — and then applied it with a regularity that MacDonald himself would not have. MacDonald digresses, but he also writes long stretches of uninterrupted narrative. He qualifies, but he also makes direct statements. The pipeline found the pattern and held it too tightly.
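The "uniform texture" the evaluator describes can be approximated with a simple count. The sketch below measures how densely the three quoted qualifying constructions occur in each passage and how much that density differs between passages. The phrase list comes from the evaluator's examples; the per-1,000-word normalization and the function names are assumptions for illustration, and no threshold is implied.

```python
import re

# Phrases quoted in the evaluator's note; a fuller list would be an assumption.
QUALIFIERS = ("and i think", "but i must", "for there is a kind of")


def qualifier_density(passage: str) -> float:
    """Occurrences of the listed constructions per 1,000 words."""
    text = re.sub(r"\s+", " ", passage.lower())
    words = len(re.findall(r"[a-z'’]+", text))
    hits = sum(
        len(re.findall(r"\b" + re.escape(q) + r"\b", text)) for q in QUALIFIERS
    )
    return 1000 * hits / words if words else 0.0


def density_spread(passages: list[str]) -> float:
    """Gap between the most and least qualifier-heavy passages."""
    densities = [qualifier_density(p) for p in passages]
    return max(densities) - min(densities) if densities else 0.0
```

A spread near zero across scenes that should feel different from one another is one rough signal that a mannerism is being applied too evenly. It flags where to look; deciding whether the evenness is a fault remains a reader's judgment.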

The agent engineering literature identifies four architectural responses to the homogeneity problem. First, inject controlled variation through rotating reference passages — different MacDonald excerpts anchoring different generation steps, so the model’s statistical center shifts across scenes. Second, vary generation temperature by scene type — higher temperature for passages requiring tonal surprise, lower for passages requiring careful thematic threading. Third, target ranges rather than fixed values for stylistic parameters — not “mean sentence length 17.7 words” but “sentence length varying between 8 and 35 words with a mean near 18.” Fourth, subtractive editing — a dedicated review pass whose function is to cut the generic and the safe, the passages that sound competent but unsurprising. This last intervention is necessarily human-directed, because identifying what is “safe” requires the aesthetic judgment that defines Tier 3.
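The first three responses are mechanical enough to sketch. The example below rotates reference passages across scenes, picks a temperature by scene type, and checks sentence lengths against a target range instead of a single value. The scene-type names, the temperature values, and the tolerance on the mean are hypothetical; only the 8 to 35 word range and the mean near 18 come from the text above.

```python
from itertools import cycle
from statistics import mean

# Hypothetical scene types and temperatures; the source does not give values.
TEMPERATURE_BY_SCENE = {"tonal_surprise": 1.0, "thematic_threading": 0.6}


def plan_generation(scenes, reference_passages):
    """Give each scene a rotating reference passage and a scene-type temperature."""
    refs = cycle(reference_passages)
    return [
        {
            "scene": name,
            "reference": next(refs),
            "temperature": TEMPERATURE_BY_SCENE[kind],
        }
        for name, kind in scenes
    ]


def within_target_range(lengths, low=8, high=35, target_mean=18, tolerance=2):
    """True if every sentence length is in [low, high] and the mean is near target.

    The tolerance of 2 words is an assumed value for illustration.
    """
    if not lengths:
        return False
    in_range = all(low <= n <= high for n in lengths)
    return in_range and abs(mean(lengths) - target_mean) <= tolerance
```

The fourth response, subtractive editing, is deliberately absent from the sketch: as the paragraph above says, deciding what counts as "safe" is a human judgment.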

The homogeneity score of 3/5 is honest evidence that these mitigations help but do not solve the problem. The pipeline’s output is substantially more varied than naive single-prompt generation. It is not yet as varied as MacDonald’s own prose. Whether this gap can be closed through architectural refinement or whether it marks a boundary of current capability is an open question the project cannot definitively answer.

How the Taxonomy Shaped the Pipeline

The taxonomy is not a finding that sits in a research document and gets cited in articles. It is the architectural blueprint that determined how every component of the pipeline was designed.

Agent role assignment. The pipeline’s agents are assigned to tiers. The writer agent operates primarily at Tier 1 and Tier 2 — it generates prose using a detailed voice specification that encodes MacDonald’s surface features (diction, syntax, punctuation patterns, register) and structural patterns (imagery systems, thematic preoccupations, narrative stance). The evaluation agents assess Tier 1 features computationally (stylometric comparison against the corpus baseline) and Tier 2 features through LLM-as-judge rubrics. Tier 3 features — worldview integration, narrative timing, the overall arc of meaning — are assessed through human direction at every significant creative decision point. The taxonomy dictates not just what the agents do, but what they are not asked to do. No agent is tasked with generating worldview. Worldview is provided by the human director through creative seeds, outline review, and beat-level evaluation gates.
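One way to picture this assignment is as a small routing table from tier to assessor. The sketch below is an illustration under assumed names (the enum members, the assessor labels, and both helper functions are hypothetical), not the pipeline's actual configuration.

```python
from enum import Enum


class Tier(Enum):
    SURFACE = 1      # Tier 1: reliably systematizable
    STRUCTURAL = 2   # Tier 2: partially systematizable
    DEPTH = 3        # Tier 3: resistant to systematization


# Hypothetical labels for the three assessment channels described above.
ASSESSOR_BY_TIER = {
    Tier.SURFACE: "stylometric_comparison",
    Tier.STRUCTURAL: "llm_judge_rubric",
    Tier.DEPTH: "human_director",
}


def assessor_for(tier: Tier) -> str:
    """Return which channel assesses features at the given tier."""
    return ASSESSOR_BY_TIER[tier]


def agent_may_originate(tier: Tier) -> bool:
    """Agents originate Tier 1 and Tier 2 material; Tier 3 comes from the director."""
    return tier is not Tier.DEPTH
```

Encoding the "not asked to do" side explicitly, as in `agent_may_originate`, keeps the boundary visible in code instead of leaving it as an unwritten convention.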

The two-layer voice specification. The voice specification, the document that instructs the writer agent on how to produce MacDonald-like prose, has two layers, organized by the taxonomy. The surface layer specifies measurable features: vocabulary distributions, sentence-length targets, syntactic preferences, register parameters, punctuation patterns. These are Tier 1 elements, checked computationally. The depth layer specifies thematic and tonal parameters: MacDonald’s theological imagery systems, his narrative stance, his fairy-tale logic, the specific symbolic vocabulary of light and shadow and water and growing things. These are Tier 2 and Tier 3 elements. The writer agent renders them in prose, working from direction the human director supplies, and the results are evaluated through LLM rubrics and human review.
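As a data structure, the two layers might look something like the sketch below. All class and field names are hypothetical. The sentence-length numbers are borrowed from the target-range example elsewhere in this piece and the imagery terms from the paragraph above; everything else is a placeholder.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SurfaceLayer:
    """Measurable Tier 1 targets, expressed as ranges and checked computationally."""
    sentence_length_range: tuple[int, int] = (8, 35)
    sentence_length_mean: float = 18.0


@dataclass(frozen=True)
class DepthLayer:
    """Thematic and tonal material, conveyed largely through examples."""
    imagery: tuple[str, ...] = ("light", "shadow", "water", "growing things")
    reference_passages: tuple[str, ...] = ()


@dataclass(frozen=True)
class VoiceSpec:
    surface: SurfaceLayer
    depth: DepthLayer

    def review_channels(self) -> dict[str, str]:
        """How each layer is checked, per the description above."""
        return {
            "surface": "computational",
            "depth": "llm_rubric_and_human_review",
        }
```

Keeping the layers as separate types makes it harder to treat a depth-layer entry as if it were a numeric target that a script could verify.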

The voice spec’s two-layer architecture reflects a specific finding from cognitive science. Saffran et al. (1996) and Bock and Griffin (2000) showed that linguistic patterns are acquired through implicit statistical learning and automatic priming; applied to style, this means readers absorb patterns through exposure, not through explicit rule-following. The surface layer of the voice spec works the way explicit instruction works: follow these rules, hit these targets. The depth layer works the way reference passages work: absorb this material, internalize these patterns, produce text that resonates with these examples. The voice spec is not a single set of rules. It is two sets of instructions operating through different cognitive mechanisms, one systematic and one mimetic.

Evaluator weights. The evaluation rubric scores passages across six dimensions: Worldview, Narrative Logic, Thematic Consistency, Emotional Register, Voice, and Surface Features. Each dimension maps to a tier. Surface Features (Tier 1) carries weight 1. Voice and Emotional Register (Tier 2) carry weight 3. Narrative Logic and Thematic Consistency (bridging Tier 2 and Tier 3) carry weight 4. Worldview (Tier 3) carries weight 6. The weights encode the faithfulness hierarchy directly: a passage’s score is dominated by the dimensions that matter most to readers and that are hardest to automate. A passage that nails the vocabulary and sentence structure but misses the theological substrate receives a low composite score. A passage that captures the worldview but has imperfect surface features receives a high one.

The story’s final evaluation scores demonstrate the weights in action. Worldview scored 5/5. Narrative Logic scored 5/5. Thematic Consistency scored 5/5. These are the Tier 3 and high-Tier 2 dimensions — the ones the pipeline was architected to prioritize through human direction at every structural decision point. Emotional Register scored 4/5, Voice scored 4/5, and Surface Features scored 4/5. The evaluator noted that the voice was “slightly over-concentrated” — it had absorbed MacDonald’s most distinctive mannerism and applied it more uniformly than he would. This is a Tier 2 problem: the pattern was captured, but the variation was not. The weighted composite — 4.55/5 — reflects the architectural bet that depth matters more than surface. Whether that bet produces prose that readers experience as faithful is a question only readers can answer.
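The weighting arithmetic can be reproduced in a few lines. The sketch below uses only the six weights and six scores stated in this section; the function name is an assumption. On those six dimensions alone the weighted mean comes to 98/21, about 4.67, so the reported 4.55 presumably also reflects dimensions not itemized here (such as Homogeneity Resistance at 3/5), whose weights this section does not give.

```python
# Weights and scores as stated in the text for the six rubric dimensions.
WEIGHTS = {
    "Worldview": 6,
    "Narrative Logic": 4,
    "Thematic Consistency": 4,
    "Emotional Register": 3,
    "Voice": 3,
    "Surface Features": 1,
}
SCORES = {
    "Worldview": 5,
    "Narrative Logic": 5,
    "Thematic Consistency": 5,
    "Emotional Register": 4,
    "Voice": 4,
    "Surface Features": 4,
}


def weighted_composite(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted mean of dimension scores on the rubric's 1 to 5 scale."""
    total_weight = sum(weights.values())
    return sum(weights[d] * scores[d] for d in weights) / total_weight


composite = weighted_composite(SCORES, WEIGHTS)  # 98 / 21
```

Because Worldview alone holds 6 of the 21 weight units, dropping it from 5 to 3 lowers this composite by 12/21, roughly 0.57 points, while the same drop in Surface Features costs under 0.1.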

Human direction as first-order concern. Agent engineering, creative intelligence, and implementation strategy converge on a finding that sounds obvious but has non-obvious architectural consequences: human creative direction is not an overhead cost to be minimized. It is an irreducible investment that determines whether the pipeline produces a creative artifact that was directed throughout or one that was merely approved at the end.

The Creative Intelligence research identified six categories of irreducible human contribution: problem-finding (deciding what story to tell), aesthetic selection (choosing between alternatives the machine generates), meaning assignment (determining what the story is about), emotional calibration (ensuring the emotional register matches the target), diversity enforcement (breaking the homogeneity pattern), and voice authenticity judgment (the final assessment of whether the prose sounds alive). Each of these maps to Tier 3. None is delegable to an agent. The pipeline budgets human attention at every significant creative decision point — seed review, outline gates, beat-level evaluation, full-story assessment — not because the agents need supervision but because the dimensions that matter most require a kind of judgment that agents structurally lack.

The director model, the architectural pattern where a human director provides vision, steers at intermediate checkpoints, and holds subtractive authority, is validated across creative industries. Film post-production, music production, game design: the pattern recurs wherever creative output requires coordination of multiple competencies under a unified aesthetic vision. The cross-domain synthesis breaks it down into five specific requirements: vision articulation before generation begins, intermediate steering at checkpoints, the right to redirect or discard, creative seed material that establishes the work’s thematic DNA, and subtractive authority, meaning the right to cut what is competent but unnecessary. The pipeline implements all five. This is not project management. It is architecture.

The Honest Gap

The three-tier taxonomy is presented throughout this collection as a practical engineering guide. It tells you where to invest automation, where to invest collaboration, and where to invest human direction. It informed every architectural decision in the pipeline, and the pipeline’s output — evaluated across nine dimensions with honest scores — suggests the taxonomy’s priorities produce better results than the surface-first alternative.

But the taxonomy carries an implicit claim that deserves scrutiny: that the boundary between tiers is structural rather than temporary.

The philosophical arguments for a permanent boundary are substantial. Polanyi’s tacit knowledge framework holds that certain forms of knowing are inherently resistant to explicit articulation: they are demonstrated through practice, not captured in rules. Kant’s account of aesthetic judgment posits that beauty is recognized through a faculty that cannot be reduced to algorithmic procedure. Bourdieu’s concept of habitus, the deep, embodied dispositions that shape perception and taste, describes exactly the kind of knowledge that Tier 3 depends on. These are not empirical claims about current model capabilities. They are philosophical claims about the nature of certain kinds of knowledge, and they have survived sustained scrutiny: decades in the case of Polanyi and Bourdieu, more than two centuries in the case of Kant.

The empirical counterargument is also substantial. The history of artificial intelligence is a history of “impossible” becoming “routine.” Speech recognition, image classification, natural language generation, game playing: each was once cited as evidence of a permanent human-machine boundary, and each fell. GPT-5 showed measurably higher stylistic diversity than earlier models. The Twain exception (62% detection versus the 96.4% average) demonstrates that the boundary is not uniform across authors. The GRPO fine-tuning results on Twain imitation were measured only through automated metrics whose Pearson correlation with human judgment ranges from 0.04 to 0.43, but they are at least suggestive that targeted training can narrow the gap for specific voices.

The honest answer is that the taxonomy reflects 2025-2026 LLM capabilities. It is a practical guide with a shelf life. Whether the Tier 3 boundary represents a permanent limit of statistical language models or a temporary capability gap that architecture and training will erode is a question the project cannot answer and the field has not resolved. The philosophical arguments for permanence are more durable than the empirical arguments for either position, but philosophy has been wrong about computational limits before.

What the project can say is this: in 2026, designing a creative AI pipeline as though all voice dimensions were equally automatable produces worse results than designing one that recognizes the three-tier structure and allocates resources accordingly. Whether that will still be true in 2028 is a bet, not a finding.

Return to the comparison that opened this piece. Sanderson succeeded not because he was a better writer than Brian Herbert and Kevin J. Anderson — that is a separate argument — but because he invested his effort in the right tier. He spent eighteen months absorbing Jordan’s worldview before writing a word of prose. He understood that the readers who had spent twenty years inside the Wheel of Time would notice if the theological and philosophical substrate was wrong, even if every proper noun and political faction was correct. He designed his approach around the faithfulness hierarchy, depth before surface, without calling it that.

The Dune prequels invested their effort in the wrong tier. The sandworms were there. The spice was there. The Atreides and the Harkonnens were there. The surface was meticulous. But Frank Herbert’s philosophical patience — his willingness to let a dinner-party scene carry more weight than a battle, his commitment to showing how power corrodes the people who wield it rather than celebrating the people who seize it — was replaced by something faster, louder, and emptier. Surface without depth. Tier 1 without Tier 3.

A creative AI pipeline faces the same choice, except the naive path — surface first — is also the default path. LLMs generate Tier 1 features reliably. The first output of any generation step will have plausible vocabulary, reasonable sentence structure, and approximate register. The temptation is to accept this as a starting point and refine from there. The taxonomy says: that starting point is the least important part of the output. The real work is in the tiers above it — in the imagery systems that cohere across scenes, in the thematic logic that holds over thousands of words, in the worldview that makes the fictional world feel like a place with its own physics rather than a setting decorated onto a plot.

Every design decision in this pipeline — the evaluator weights, the two-layer voice spec, the human direction gates, the diversity enforcement mechanisms, the architectural commitment to hierarchical generation — flows from the taxonomy’s central claim: that depth is harder than surface, more important than surface, and requires fundamentally different engineering than surface. The story the pipeline produced scored 5/5 on Worldview and 3/5 on Homogeneity Resistance. That gap is the taxonomy working as designed. The depth was prioritized. The surface variation was not yet solved. The pipeline knows which problem is which, and it allocates its resources accordingly.

Whether the gap between 3/5 and 5/5 can be closed by better architecture, or whether it marks the boundary where engineering ends and something else begins, is the question this collection will return to. For now, the taxonomy does what a good engineering guide should do: it tells you where your effort will produce returns and where it will produce diminishing ones, and it is honest about the limits of its own permanence.